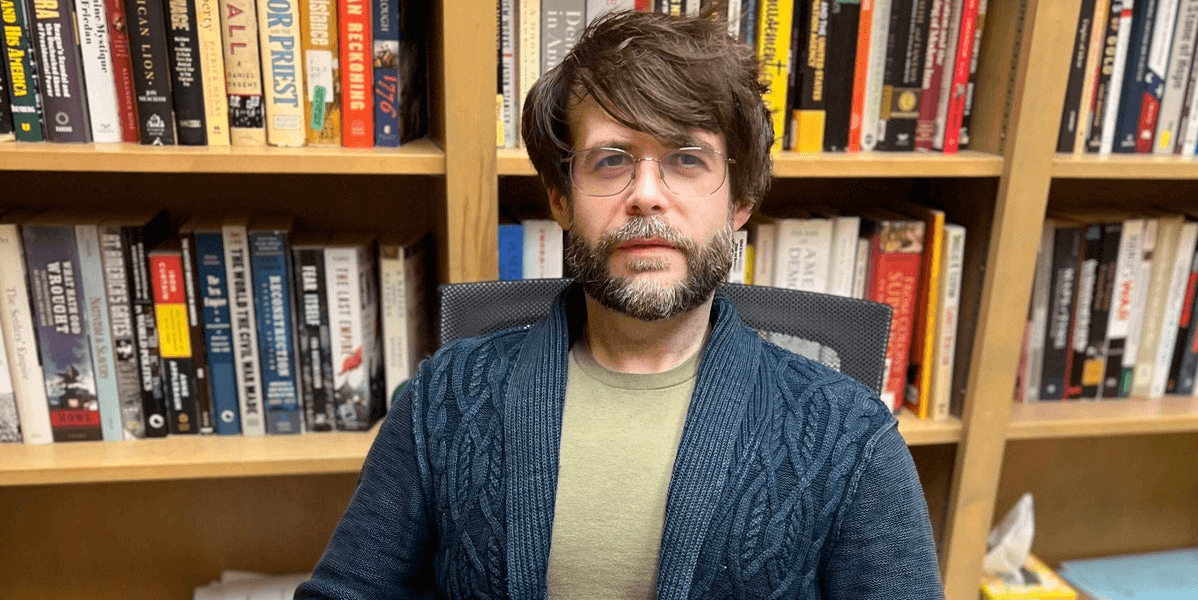

A professor used prompt injection to catch students cheating with AI.

This morning I posted about indirect prompt injection. Attackers hiding invisible instructions in web pages to hijack AI agents.

Same technique. Different story.

A history professor at a small university embedded hidden text inside a student assignment. Instructions invisible to the human eye but readable by ChatGPT. He asked it to write the essay from a Marxist perspective, a frame completely out of place for a book about an 1800 slave rebellion.

39% of his 122 students submitted AI-generated work. Every one of them followed the hidden instruction without question. Not one could explain what Marxism was when confronted.

The mechanism is identical to what attackers use against AI agents. Hide an instruction. The AI reads it. The AI follows it. The human never notices.

Two sides of the same coin. Attackers use it to hijack your AI systems. A professor used it to expose students who surrendered their thinking to AI.

Want longer reads on these topics?

Insights covers the same topics in depth: research-backed analysis on AI, value creation, and building companies.

Read Zaruko Insights